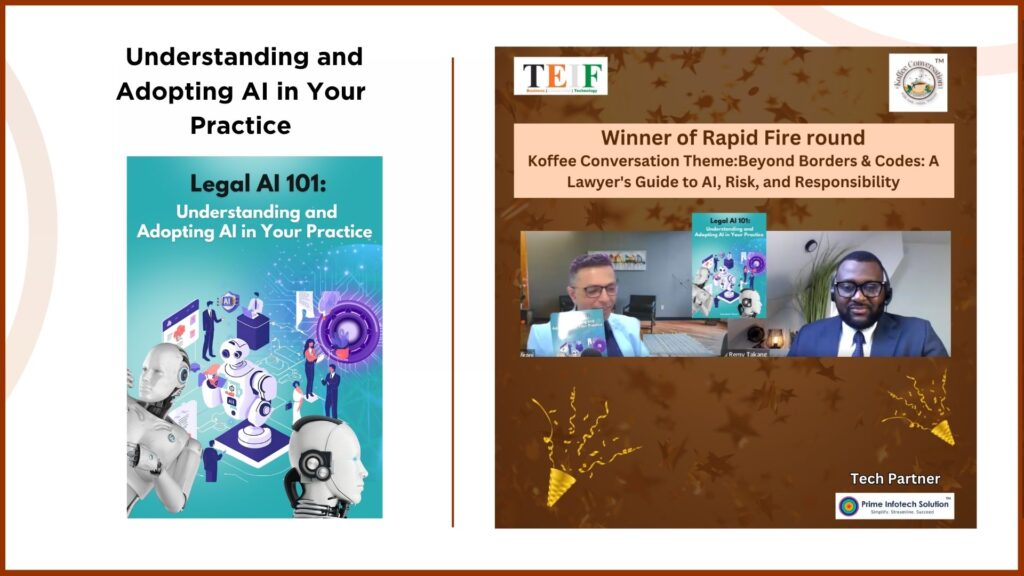

Remy Takang’s journey pairs resilience with future-focused expertise. Operating at the intersection of law, artificial intelligence, data protection, and governance, he brings a rare cross-continental perspective shaped by experiences across Africa, Europe, and global advisory ecosystems. As Legal Counsel and Head at Ohadesk, Remy is driven by one core belief: technology must move forward with clarity, responsibility, and trust.

In this episode of The Koffee Conversation, Remy distills the complexity surrounding AI into one simple idea: clarity is the new currency. His insights go beyond legal frameworks to address the human side of innovation, emphasizing that speed without direction creates risk, while thoughtful governance builds sustainable progress. This conversation is a reality check for leaders navigating AI adoption in a fast-evolving world.

Remy’s career journey began in Cameroon, where he practiced law before making a pivotal decision to pursue global education during a period of socio-political instability. Moving to Belgium, he strategically layered his expertise—starting with international business law, then intellectual property and ICT law, followed by globalization and development. Each step was intentional, aligning his growth with the changing needs of the world.

As technology rapidly evolved, Remy transitioned into AI governance, risk, and compliance—recognizing that the future of law lies in guiding innovation responsibly. Today, his work focuses on helping organizations adopt AI with structured governance, ethical clarity, and operational readiness—ensuring that technology serves people, not the other way around.

Key Highlights of the Koffee Conversation with Remy Takang

- Clarity is essential for effective legal advisory in complex AI ecosystems

- Speed in innovation must be balanced with governance “guardrails”

- Trust and transparency form the foundation of sustainable tech-driven businesses

- Compliance should be practiced as a culture, not treated as a checkbox

- AI adoption fails when organizations do not define their core problem first

- Internal governance structures must be established before deploying AI systems

- Accountability frameworks are critical for managing AI-related risks

- Training teams is essential to prevent data misuse and operational gaps

- Global AI regulations differ based on regional values and legal traditions

- Human judgment must always remain central despite AI advancements

- Lawyers must develop technical understanding and strong prompting skills

- Due diligence is non-negotiable when using AI-generated outputs

- Fear of missing out (FOMO) drives poor AI adoption decisions

- Continuous learning and research are key to staying relevant in evolving fields

- Leadership in AI requires ethical thinking, critical analysis, and adaptability

▶️ Watch the full episode on YouTube to explore how clarity, responsibility, and human judgment can shape the future of AI, law, and global governance.
